As an app marketer, I am usually tasked with CRO for all the companies I work with. I often think about this question: When is it time for CRO? And when is doing CRO the most impactful? Having been able to market app products for both startups and mature-stage companies, I really think there is some nuance to this topic; CRO has its strengths and weaknesses, and I’m writing this post to explore this topic further.

My Thesis: For startups on the verge of scaling or already on the train to scale, CRO work (or, in app marketing, ASO work) is unnecessary.

Standing behind my thesis: During aggressive scaling, when your VC (or Family?) is pouring millions into your bank to drive that growth, and everybody from TechCrunch to the niche influencers is talking about the ‘next thing (your app)’, your audience is changing faster than your tests can measure. The data you’re collecting is probably incomplete, and the decisions you make based on it lock you into a local maximum. If you’re iterating on your hypotheses & storefront creatives on this basis, you’re spinning your wheels chasing relative conversion lifts and burning resources in the process.

Chapter 1: The Seeded Scalers – CRO Need Not Apply.

When you have the magic of money filling your sails, your app is not compatible with CRO. In fact, trying to lift that decreasing 10% CVR you see on your dashboard is, in my opinion, a waste of resources.

Why?

Your traffic composition will likely change or be in a constant state of flux. Your app is out there with a strong marketing budget from your friendly VC; they want to see your graphs go up; you spend everything in your power to grab the users you think your app is most compatible with, and you grab them by the bucketful.

When you’re doing this, you are playing with multiple addressable markets to find the product’s fit; naturally, the audience composition will likely change by week or even by day. It’s not hard to imagine that if you run a CRO experiment here to increase your conversion, you’re likely running a test on a potentially unstable audience, which will give you false results.
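To make the mix-shift point concrete, here is a minimal Python sketch. The segment names, conversion rates, and weekly traffic shares are all made up for illustration; nothing here comes from real app data. It only shows that a blended CVR can fall week over week even when no individual audience converts any worse.

```python
# Hypothetical segments with FIXED conversion rates; only the traffic mix changes.
SEGMENT_CVR = {"core_fitness": 0.14, "broad_lifestyle": 0.06}


def blended_cvr(mix):
    """Weighted-average CVR for a traffic mix given as {segment: share}."""
    total_share = sum(mix.values())
    return sum(SEGMENT_CVR[seg] * share for seg, share in mix.items()) / total_share


# Assumed weekly traffic shares as paid spend pushes into a broader audience.
weekly_mix = [
    {"core_fitness": 0.50, "broad_lifestyle": 0.50},
    {"core_fitness": 0.35, "broad_lifestyle": 0.65},
    {"core_fitness": 0.20, "broad_lifestyle": 0.80},
]

weekly_cvr = [blended_cvr(mix) for mix in weekly_mix]
for week, cvr in enumerate(weekly_cvr, start=1):
    print(f"week {week}: blended CVR = {cvr:.1%}")
```

In this toy setup the dashboard number slides from 10% downward purely because the audience changed, which is exactly the kind of movement a storefront A/B test would misread as a creative problem.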

The solution: In this chapter of your growth stage, your solution is a full A/Z test, not “color background” A/B testing. A full structural redesign of how you present your app product (or maybe just the product in general) will fit much better here. You don’t have stable traffic yet to reach statistical significance to even determine whether your hypothesis is grounded in the right audience; for the early scaling stage, it’s best to test the full structure presentation rather than incremental work.
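A rough way to see why incremental tests struggle here is to estimate how much traffic a test needs. The sketch below uses the standard normal-approximation sample size formula for comparing two proportions; the 10% baseline, the lift sizes, and the function name `visitors_per_variant` are my own assumptions for illustration, and the store consoles may compute significance differently.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(base_cvr, relative_lift, alpha=0.05, power=0.80):
    """Approximate page views needed per variant to detect a relative CVR lift
    with a two-sided two-proportion z-test (normal approximation)."""
    p1 = base_cvr
    p2 = base_cvr * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical comparison: a small "background color" lift vs. a large structural lift.
small_tweak = visitors_per_variant(base_cvr=0.10, relative_lift=0.05)
big_redesign = visitors_per_variant(base_cvr=0.10, relative_lift=0.50)

print(f"5% relative lift:  {small_tweak:,} page views per variant")
print(f"50% relative lift: {big_redesign:,} page views per variant")
```

Under these assumptions the small tweak needs tens of thousands of stable page views per variant, while a large structural difference needs only several hundred. That is the practical argument for testing big swings while your audience is still in flux.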

What is A/Z? Basically, instead of designing your app store page around a hypothesis like “I think a blue background is better than a red one for CVR,” you say, “I think users who want my step counter feature are more likely to convert in my fitness tracking app, so I’m going to focus my design on that feature and test it against a more general feature-focused design.”

Chapter 2: Shower and Grower – The Potential Trap.

Like most sophomores, the middle stage between scaling and maturity is awkward. Per Merriam-Webster, the word sophomore has Greek roots, combining the wisdom of sophistēs with the Greek word mōros, meaning “foolish.” I think this describes this stage of the business succinctly: you are a combination of wisdom and foolishness at the same time.

At this stage, scaling economics still dominate. BUT, as CAC or CPI starts to creep up, the signals become less and less clear about what you should do. A common question I think most teams will ask: (Example below would be for a subscription-style app product)

“If installs are up but subscriptions and retention are down, what would you investigate and how would you partner cross-functionally?”

The question is sound; what would you investigate at this point? You’re still scaling, but you can’t ignore the fact that it’s getting harder and harder. This is where most teams try to solve the problem by doing “ASO,” misdiagnosing it completely and running endless ASO A/B tests until “they get there.” This is the trap: you’ll spin your wheels again and again, wasting creative resources and manpower on A/B tests against a default app page that is still scaling, which gives you false positives or relative conversion rate lifts that aren’t moving the needle.

Now, to be fair, the A/B testing kit we have in the platforms right now (App Store Connect + Google Play Console) is far from the level of sophistication we need to diagnose and remedy the problem. That is why most marketing teams default to “OH my CAC/CPI is going up, we need someone to do App Storefront CRO TODAY!!!!” And generally, that instinct is right, but sometimes it’s not.

In my opinion, for app marketing/ASO work, this is where you abandon default page A/B testing and go for cohort testing.

Before we get into this point: cohort testing requires your product to be highly instrumented and your marketing team to be fully aware of the demographics they are targeting. This means Marketing AND Product must work hand in hand to create a sufficient framework for this test.

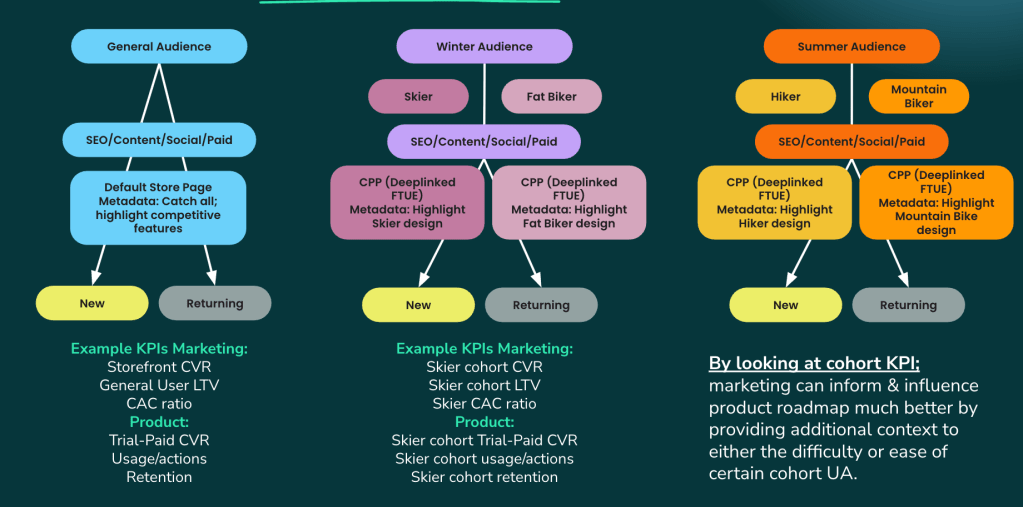

But a basic cohort test looks like this. Your general audience is at the top level; for ASO, this is your default page. Now, split the default page into multiple segments using CPPs/CSLs (Custom Product Pages on the App Store, Custom Store Listings on Google Play), and route your top-of-funnel marketing through them. Test the pre/post CVR of each of these user types. I drew up a framework visual to illustrate this.

Now, if your product team is as sophisticated as you are on the marketing side, they can track users coming from these CPP/CSL and measure the impact on these cohorts’ KPIs.
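The pre/post comparison per cohort can be sketched in a few lines of Python. Everything in this example is hypothetical: the cohort names, the page view and install counts, and the `PeriodStats` helper are placeholders, and in practice the numbers would come from your own store console exports or attribution data.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Page views and installs for one cohort over one measurement period."""
    page_views: int
    installs: int

    @property
    def cvr(self):
        return self.installs / self.page_views if self.page_views else 0.0


def pre_post_lift(pre, post):
    """Relative CVR change from the pre period to the post period."""
    if pre.cvr == 0:
        raise ValueError("pre-period CVR is zero; relative lift is undefined")
    return (post.cvr - pre.cvr) / pre.cvr


# Hypothetical cohorts, each routed through its own CPP/CSL: (pre, post).
cohorts = {
    "step_counter_cpp": (PeriodStats(4000, 360), PeriodStats(4200, 504)),
    "sleep_tracking_cpp": (PeriodStats(3000, 300), PeriodStats(3100, 279)),
}

lifts = {name: pre_post_lift(pre, post) for name, (pre, post) in cohorts.items()}
for name, lift in lifts.items():
    print(f"{name}: CVR change {lift:+.1%}")
```

Keeping each cohort separate is the point of the exercise: a blended default-page number would hide the fact that one audience responded well and another got worse.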

Be warned: this is a very broad, general opinion; your data is specific to your app. Don’t follow this blindly, because it lacks the nuance of what’s going on for you.

Chapter 3: Product Maturity – CRO Takes Center Stage.

This is where CAC/CPI becomes crazy, and the general sentiment is that CRO/ASO organic work is necessary. Generally speaking, this is where you begin to do CRO work because any “incremental” gain saves you so much money: a higher conversion rate means more downloads from the same spend, which lowers your CAC/CPI. This totally makes sense, right?
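The arithmetic behind that logic is simple enough to sketch. The spend, page view, and CVR figures below are invented for illustration, and the model assumes spend and store traffic stay constant while only the conversion rate changes.

```python
def cost_per_install(spend, page_views, cvr):
    """CPI when a fixed spend drives a fixed number of store page views."""
    installs = page_views * cvr
    return spend / installs


# Hypothetical monthly numbers; only the store CVR differs between the two cases.
spend = 100_000
page_views = 500_000

cpi_before = cost_per_install(spend, page_views, cvr=0.10)
cpi_after = cost_per_install(spend, page_views, cvr=0.11)
savings = 1 - cpi_after / cpi_before

print(f"CPI at 10% CVR: ${cpi_before:.2f}")
print(f"CPI at 11% CVR: ${cpi_after:.2f}")
print(f"CPI reduction:  {savings:.1%}")
```

With these made-up inputs, a one-point CVR gain takes CPI from about $2.00 to about $1.82, roughly a 9% reduction on the same budget.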

Yes, it does, and I won’t argue with this logic. In fact, this is where CRO work is most critical because it allows you to adapt as user behavior shifts. CRO work is basically about catching up with or maintaining the trend of how users want to interact with your app, whether they are new or returning.

But there’s one more scenario that I’ve come across in the wild that rears its ugly head more often than I would like. Let me reframe this:

I wrote earlier about local maxima and the potential trap a team could fall into when they are scaling their app, i.e., testing on a lower hill rather than a larger one. But what happens when your app has been online since 2012? Does local maximum still apply?

The short answer, from my personal experience working with brands that have been online since basically the dawn of the internet: there is such a thing as the “Maximum-Maximum” problem. This means your app has grown to the point that it can no longer expand into other markets, and you’ve capped its growth. Try as you may, your multi-million dollar marketing budget isn’t moving the needle the way you want, and the C-Suite is breathing down your neck about why the “graphs” aren’t moving in the right direction, because either somebody sold them on the idea that there is still growth, OR they really just didn’t realize that this is a thing. And the thing is, your product’s growth can never be infinite.

The Action: When the above is true, it’s time to fundamentally revamp your product. This will lead to a revamp of your marketing funnel’s strategies and tactics.

Unfortunately, the “revamp your product” is ultimately a Product Manager’s quest to solve, but one that I’ve been in the supporting role for nearly half of my career as an App marketer; we unfortunately can’t all be the star of the show. 🥺

Remember the cohort framework I proposed earlier? I use that exact framework at this stage to help inform Product Managers where to take the product next. In this stage, PMs will usually research both the 1st and 2nd data point OR leverage qualitative/quantitative methods to create a new vision for the product, the “next hill.” The framework I propose comes into play by providing additional performance marketing context and forecasting the level of difficulty of the PM’s new vision for the product.

In simple terms, I basically tell them whether their vision is doable. How hard is it to capture this “new type of user,” and does the market even expect this type of “new feature” from this brand/app? Using the live performance data I normally have access to, I squeeze myself into those product conversations even when they don’t want me, because the alternative is them spending millions on a feature that falls flat. Then Product sends you a message saying, “Why didn’t your marketing campaign attract the right users?” And around the blame game goes.

Truly, though, the worst feeling in the world is when Product unveils a new feature or product, and you know from what you see/do in the market that this new feature/product is going to flop. At this stage of the business, marketers need to be much more proactive and reel in the Product team by providing critical forecast data. Something that apparently most teams don’t think about until it’s too late.

Basically, in this stage of the game, work with the Product team, or ELSE!

Final Note:

There is no simple answer in business; I don’t expect there to be a clean answer that everyone can apply to every single scenario. Solutions should be bespoke, and my experience isn’t applicable to every single portfolio out there, but I hope, at the very least, my thought stream kicks the noodle around for you, if only to give you some ideas.